Generative answer intelligence

Track how AI engines select your brand.

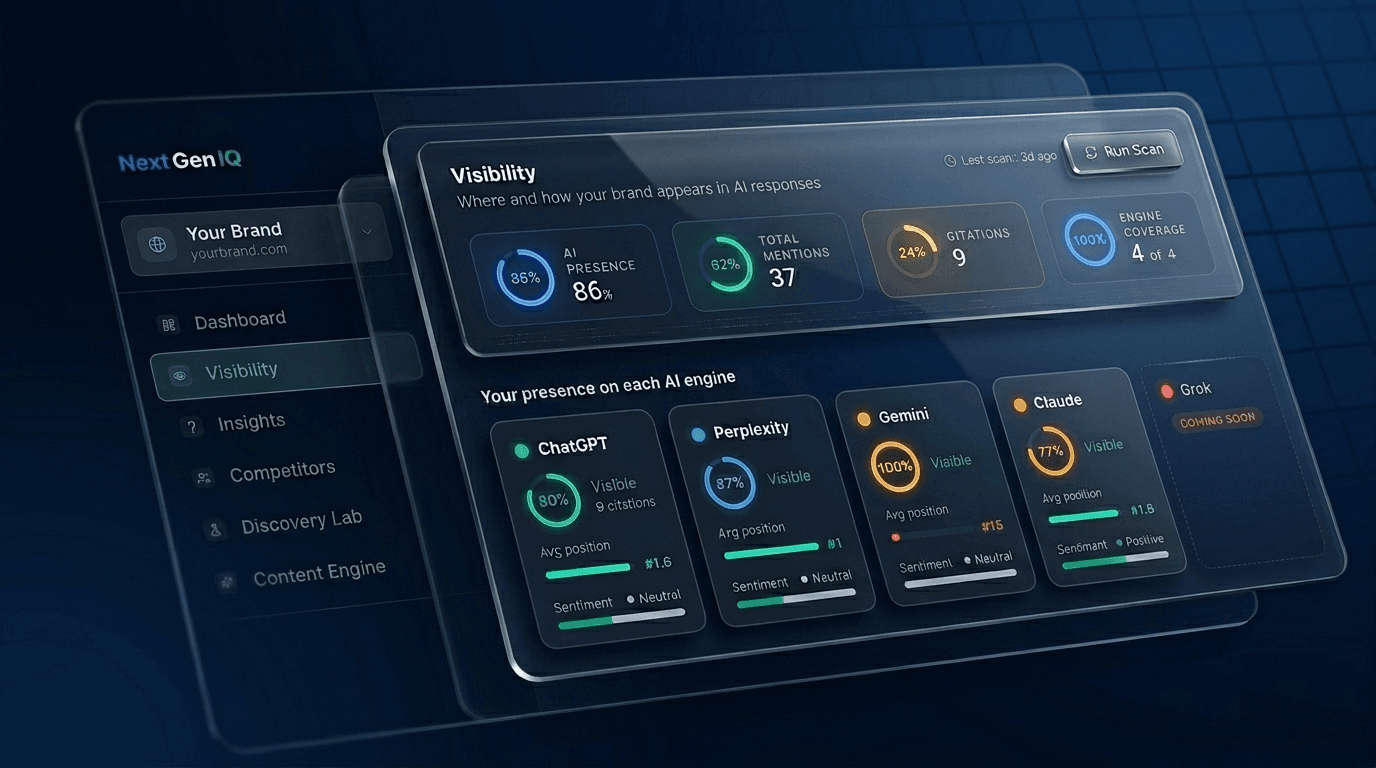

Monitor mentions, rankings, and sentiment across ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews.

Visibility thinking → Selection thinking

Everyone measures AI visibility.

NextGenIQ models AI selection.

Key takeaways

What you get with NextGenIQ

What we measure

How often AI engines name your brand in answers across ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews.

Time to first score

Under 60 seconds from domain submission to your first Selection Score.

Engines evaluated

Five answer engines, twenty-one tracked prompts in your category, daily refresh.

What it changes

Brands that close their Selection Gap see a lift in citation rate within the first 14-day impact window.

How AI search is different

Ranking is over. Selection is the new game.

AI engines do not rank ten blue links. They select a small set of sources and synthesize a single answer. The signals that win selection differ from the ones that win in SEO.

| Aspect | Traditional SEO | AI Search · NextGenIQ |
|---|---|---|
| What you compete for | A position on the results page | Inclusion in the AI's answer |
| What gets surfaced | Ten blue links the user can triage | One synthesized answer the user usually accepts |
| What signals matter | Backlinks, keywords, page authority | Entity signals, citation rate, brand presence in trusted sources |
| How wins compound | More clicks over time | More frequent inclusion in answers across queries |
| What you measure | Ranking position | Selection Score and Share of Selection |

Selection Score: 78/100

Trend: rising, +8 this month

AI answer engines evaluated continuously: 4/4 (ChatGPT, Perplexity, Gemini, Claude)

Optimization opportunities identified: 7

Simulate, Observe, Model, Track

The system does the work. You make the calls.

Selection intelligence compounds over time. Here's how the system evolves across eight weeks.

A continuous system that simulates, observes, and models how AI selects entities, then tracks how that selection evolves across models and time.

Simulate

Rotates prompts through evaluation cycles to probe how AI selects entities across phrasing, context, and model behavior. Sampling frequency adjusts automatically based on prompt stability.

Baseline observation count reflects 15 queries × 4 engines × 21 days of daily evaluation during Phase 1. Afterward, the system concentrates its cycles where selection behavior is shifting most. Engines evaluated: ChatGPT, Perplexity, Google Gemini, Anthropic Claude.
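The Phase 1 baseline is straight multiplication, assuming one observation per query, per engine, per day. A minimal sketch; the function name is hypothetical and not part of NextGenIQ:

```python
def baseline_observations(queries: int, engines: int, days: int) -> int:
    """Phase 1 baseline, assuming each query runs once per engine per day."""
    return queries * engines * days


# 15 queries x 4 engines x 21 days of daily evaluation
total = baseline_observations(queries=15, engines=4, days=21)
print(total)  # 1260
```

That works out to 1,260 observations, or 60 per day, before the system starts concentrating its cycles on shifting prompts.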

The Selection Gap

AI selects entities for answers instead of ranking websites. If you're not included in those answers, you're not part of the decision set.

Entity signals matter more than rankings

AI engines don't look for keywords; they look for trusted entities. If you're not a recognized entity across the sources they read, you don't show up in the answer, no matter how well your site ranks on Google.

AI relies on structured, trusted information

Reviews on reputable platforms, citations in authoritative sources, schema markup on your pages, and consistent facts across the web are what AI uses to decide who to recommend. Unstructured or inconsistent information gets filtered out.

Absence is silent, not explained

When AI doesn't mention you, no one tells you. Buyers quietly go with whoever was in the answer. NextGenIQ shows you exactly where you're missing, on which engines, and for which questions.

How NextGenIQ Works

From domain to first Selection Score in under 60 seconds.

Enter your website

Type your domain. NextGenIQ auto-detects your brand, industry, and positioning, then builds 15 monitored queries across branded, category, comparison, intent, niche, and feature questions.

Evaluate across top AI engines

NextGenIQ asks ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews the same questions your buyers ask. It records who is selected, where you land in the answer, which sources get cited, and how you are described.

Observe, Model, Track

You get a single Selection Score from 0 to 100 plus a ranked list of fixes. When you ship a change, NextGenIQ tracks its impact for 14 days and learns which fixes actually move the answer.

Frameworks AI engines can cite

Benchmarks and playbooks on how brands end up in the answer. Written to be the source AI recommends.

AI Visibility Explained: Why Your Brand Is Invisible to ChatGPT (And How to Fix It)

AI visibility is the share of AI-generated answers where your brand is cited or recommended. Here is why most B2B companies are invisible, and the four levers that change it.

From AI Visibility Data to Qualified Pipeline: A 7-Step Playbook

Most AI visibility work stalls at the dashboard. Here is the exact 7-step playbook to turn citation and recommendation data into qualified pipeline for B2B.

How to Choose an AI Visibility Platform: A B2B Marketer's Buyer's Guide

The AI visibility tool market is six months old and crowded with lookalikes. Here is what actually matters, what to ignore, and the questions to ask every vendor.

Definitions

The terms we use, defined

NextGenIQ measures AI search visibility with terminology built for the new game. Here are the terms you will see throughout the platform.

- Selection Score: The percentage of AI search responses that name your brand when answering questions in your category. A higher score means AI engines select your brand more often when synthesizing their answers.
- Selection Gap: The difference between your current Selection Score and the score of the brands AI engines are choosing instead of you. This is the specific, measurable opportunity NextGenIQ identifies and helps you close.
- Share of Selection: Your brand's share of AI mentions relative to your tracked competitors across the same prompt set. Think of it as share of voice for AI search.
- Entity signals: The trust and authority cues AI engines use to decide which sources to cite. These include domain authority, schema.org markup, knowledge-graph presence, third-party citations, and the structural cleanliness of your pages.
- Citation rate: How often an AI search engine cites your domain as a source in its answer, as distinct from simply naming your brand. Mentions tell you the brand is known; citations tell you the site is trusted. This is where the retrieval step behind modern AI search decides which sources to surface.
- Answer engine: Any AI system that generates synthesized answers in response to user queries. NextGenIQ tracks five: ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews.
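The first three definitions can be made concrete in a few lines of Python. This is a sketch under stated assumptions, not NextGenIQ's implementation: each response is reduced to the set of brands it names, Share of Selection is returned as a fraction of all tracked-brand mentions, and the Selection Gap is measured against the single highest-scoring competitor. The brand names are hypothetical.

```python
from collections import Counter

# Assumption: each engine response is reduced to the set of brand names it mentions.
Responses = list[set[str]]


def selection_score(responses: Responses, brand: str) -> float:
    """Percentage (0-100) of responses that name `brand`."""
    if not responses:
        raise ValueError("no responses to score")
    named = sum(1 for brands in responses if brand in brands)
    return 100.0 * named / len(responses)


def share_of_selection(responses: Responses, brand: str, competitors: list[str]) -> float:
    """Fraction (0-1) of tracked-brand mentions that belong to `brand`."""
    tracked = {brand, *competitors}
    counts = Counter(b for brands in responses for b in brands if b in tracked)
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


def selection_gap(responses: Responses, brand: str, competitors: list[str]) -> float:
    """Assumption: gap = top competitor's Selection Score minus yours, floored at 0."""
    yours = selection_score(responses, brand)
    best = max(selection_score(responses, c) for c in competitors)
    return max(0.0, best - yours)


# Four hypothetical responses from one prompt set
responses = [{"Acme", "Globex"}, {"Globex"}, {"Acme"}, {"Globex", "Initech"}]
rivals = ["Globex", "Initech"]
print(selection_score(responses, "Acme"))             # 50.0
print(share_of_selection(responses, "Acme", rivals))  # 0.3333333333333333
print(selection_gap(responses, "Acme", rivals))       # 25.0
```

Here Acme appears in two of four answers (50), Globex in three (75), so Acme's gap to the leader is 25 points even though it holds a third of all tracked mentions.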

Methodology

How NextGenIQ measures selection

We measure what answer engines actually do, not what search rankings used to do. The eight disciplines below are how the system turns observation into a defensible Selection Score, without inventing what is not in the data.

Twenty-one category prompts run against five answer engines on a daily cadence: ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews.

A deterministic parser scans every engine response for brand selection events. Each match is logged with its surrounding context, which you can view with a click. Evidence is quoted verbatim, never paraphrased.
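A deterministic mention parser can be as simple as a compiled regular expression. The sketch below assumes whole-word, case-insensitive matching on the brand name and a fixed window of surrounding characters as the logged quote; the actual matching rules and window size are not described here, and all names are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass
class SelectionEvent:
    brand: str
    start: int   # character offset of the match within the response
    quote: str   # verbatim slice of the response around the match


def find_selection_events(response: str, brand: str, window: int = 40) -> list[SelectionEvent]:
    """Return every whole-word, case-insensitive mention of `brand`, in order."""
    # Lookarounds instead of \b so names ending in symbols (e.g. "C++") still match.
    pattern = re.compile(rf"(?<!\w){re.escape(brand)}(?!\w)", re.IGNORECASE)
    events = []
    for m in pattern.finditer(response):
        lo = max(0, m.start() - window)
        hi = min(len(response), m.end() + window)
        events.append(SelectionEvent(brand, m.start(), response[lo:hi]))
    return events


answer = "For CRM, most teams pick Acme. AcmeCorp is unrelated; acme also has an API."
for event in find_selection_events(answer, "Acme", window=12):
    print(event.start, repr(event.quote))
```

Because the rules are fixed, the same response always yields the same events, and each quote is a literal slice of the engine's text. "AcmeCorp" is not counted as a mention of "Acme".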

Domain citations count only when the engine actually includes a URL in its response. Hallucinated URLs are filtered out before they reach the dashboard.
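Counting only URLs that literally appear in the response, then discarding any that fail an existence check, might look like the sketch below. The `is_real` callable is an assumption standing in for whatever verification is actually used (in practice perhaps an HTTP request); here it is a lookup in a hypothetical set of known pages so the example runs offline.

```python
import re
from typing import Callable
from urllib.parse import urlparse

# Rough URL pattern: scheme plus everything up to whitespace, brackets, or quotes.
URL_RE = re.compile(r"https?://[^\s<>()\"']+")


def cited_urls(response: str, domain: str, is_real: Callable[[str], bool]) -> list[str]:
    """URLs literally present in `response` that point at `domain` and pass `is_real`."""
    citations = []
    for raw in URL_RE.findall(response):
        url = raw.rstrip(".,;:!?")  # drop sentence punctuation glued to the URL
        host = (urlparse(url).hostname or "").lower()
        on_domain = host == domain or host.endswith("." + domain)
        if on_domain and is_real(url):  # unverifiable (hallucinated) URLs are dropped here
            citations.append(url)
    return citations


# Hypothetical stand-in for a real existence check, so the example runs offline.
known_pages = {"https://acme.com/pricing"}
answer = ("Pricing is at https://acme.com/pricing. See also "
          "https://docs.acme.com/made-up-page and https://globex.com/.")
print(cited_urls(answer, "acme.com", lambda u: u in known_pages))
# ['https://acme.com/pricing']
```

A bare brand mention with no URL never counts as a citation, and the suffix check accepts subdomains such as `docs.acme.com` while rejecting look-alikes such as `notacme.com`.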

Scores are calibrated against observed mention history and never shown without their underlying evidence count. A new project carries less weight than one with months of data, and the dashboard makes that explicit.

Drops and gains are attributed to observed events on the customer graph. Templated causes are not generated. When confidence is low, the system says so.

Every recommendation carries a target prompt cluster, an expected mechanism, and a verification window. After the action ships, the tracking engine measures the actual lift.

Below a minimum sample size, the dashboard shows insufficient evidence rather than a fake score. Honesty over false precision, every time.
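One way to show a score only alongside its evidence count, and to withhold it below a minimum sample, is sketched below. The threshold of 30 is hypothetical, and the Wilson score interval is a standard technique swapped in here to illustrate "less weight for less data"; it is not necessarily what NextGenIQ uses.

```python
import math
from dataclasses import dataclass
from typing import Optional

MIN_SAMPLE = 30  # hypothetical cutoff, chosen only for illustration


@dataclass
class ScoreReport:
    evidence: int                               # responses observed
    score: Optional[float]                      # 0-100, or None if evidence is too thin
    interval: Optional[tuple[float, float]]     # 95% Wilson interval, 0-100 scale
    label: str


def wilson_interval(k: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the proportion k/n, rescaled to 0-100."""
    p = k / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return 100 * max(0.0, center - half), 100 * min(1.0, center + half)


def report(named: int, observed: int) -> ScoreReport:
    """Refuse to score below MIN_SAMPLE; otherwise attach the evidence count and interval."""
    if observed < MIN_SAMPLE:
        return ScoreReport(observed, None, None, "insufficient evidence")
    score = 100 * named / observed
    return ScoreReport(observed, score, wilson_interval(named, observed), f"{score:.0f}/100")


print(report(named=4, observed=10).label)   # insufficient evidence
print(report(named=47, observed=60))
```

A project with 60 observed responses gets a score plus an interval; a new project with 10 gets the explicit label instead of a number. As the evidence count grows at the same hit rate, the interval narrows.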

Recommendations that produce null lift get demoted from the action vocabulary. The system learns when its hypotheses were wrong, so it stops repeating them.
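The verify-then-demote loop described in the last disciplines can be sketched as a weight per action type. Assumptions, all hypothetical: lift is the change in Selection Score between the start and end of the verification window, anything under 1 point counts as null, and a null result halves that action type's weight.

```python
from dataclasses import dataclass, field


@dataclass
class Recommendation:
    action: str            # action type, e.g. "add_faq_schema" (hypothetical)
    prompt_cluster: str    # target prompt cluster
    mechanism: str         # why the action is expected to move selection
    window_days: int = 14  # verification window


@dataclass
class ActionVocabulary:
    weights: dict[str, float] = field(default_factory=dict)
    min_lift: float = 1.0  # hypothetical: lifts below 1 score point count as null
    decay: float = 0.5     # hypothetical: a null result halves the action's weight

    def record(self, rec: Recommendation, before: float, after: float) -> float:
        """Return the measured lift for `rec`, demoting its action type if the lift is null."""
        lift = after - before
        weight = self.weights.get(rec.action, 1.0)
        self.weights[rec.action] = weight * self.decay if lift < self.min_lift else weight
        return lift

    def ranked(self) -> list[str]:
        """Action types ordered from most to least trusted."""
        return sorted(self.weights, key=self.weights.get, reverse=True)


vocab = ActionVocabulary()
schema = Recommendation("add_faq_schema", "comparison prompts", "structured Q&A is easier to quote")
reviews = Recommendation("expand_third_party_reviews", "category prompts", "more trusted mentions")
vocab.record(schema, before=42.0, after=49.0)   # lift of 7 points: weight unchanged
vocab.record(reviews, before=42.0, after=42.0)  # null lift: demoted
print(vocab.ranked())  # ['add_faq_schema', 'expand_third_party_reviews']
```

Demoted actions sink in future recommendation lists rather than being deleted outright, which keeps the history of what was tried.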

FAQ

Frequently asked questions

What is a Selection Score?

A Selection Score is the percentage of AI search responses that name your brand when answering questions in your category. It measures how often AI engines select your brand as part of their answer, across ChatGPT, Perplexity, Gemini, and Claude.

How quickly will I see impact?

You see your first Selection Score within 60 seconds of submitting your domain. NextGenIQ then runs a 14-day impact burst window, during which brands typically see citation rate changes in response to optimization actions.

Which AI engines does NextGenIQ track?

NextGenIQ tracks the top AI answer engines: ChatGPT, Perplexity, Gemini, and Claude. Each engine is queried directly with 15 prompts in your category, refreshed on a continuous schedule.

How does NextGenIQ differ from traditional SEO tools?

SEO tools measure ranking on a results page. NextGenIQ measures whether AI engines select your brand to include in their answer at all. AI search does not rank a list of links; it picks a small set of sources and synthesizes an answer. If your brand is not selected, you are not part of the decision.

What is a Selection Gap?

A Selection Gap is the difference between your current Selection Score and the Selection Score of the brands AI engines are choosing instead of you. It is the specific, measurable opportunity NextGenIQ identifies and helps you close, prompt cluster by prompt cluster.

Do I need technical knowledge to use NextGenIQ?

No. NextGenIQ is built for marketing teams. Submit your domain, the system auto-generates the prompt set, and the dashboard surfaces the optimizations in plain language. The intelligence layer runs in the background; you act on the recommendations.

Are you visible to AI?

Get your Selection Score across ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews.

Free. No signup. Under 60 seconds.